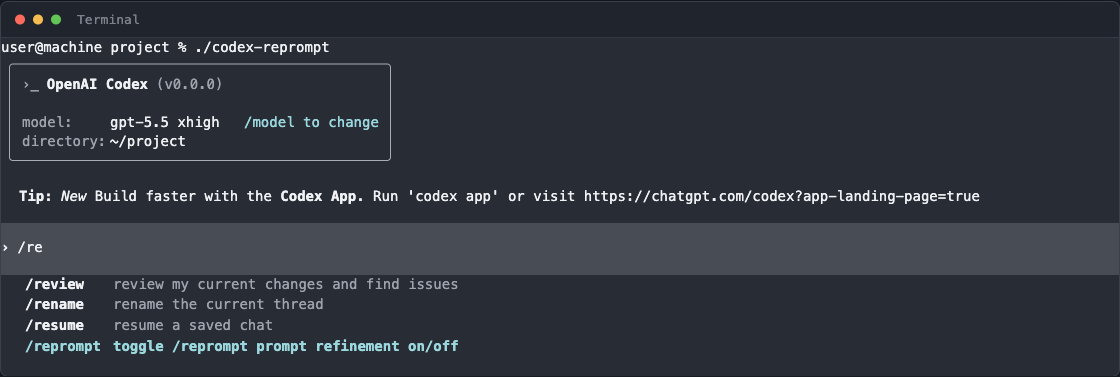

Reprompt: a Codex fork that rewrites your prompt before the agent runs

Why a skill could not enforce “rewrite-only” behavior in Codex, and how a TUI-level interceptor with grounded refinement and per-task profiles solves it instead.

| Repo | ravikanchikare/codex-reprompt |
|---|---|
| Upstream | openai/codex |
| Surface | TUI-only interceptor on /reprompt |
| Default refiner | o4-mini via the Responses API |
| Configuration | `~/.codex/config.toml` + `~/.codex/reprompt/<profile>.toml` |

Why a skill was not enough

The first attempt to get “rewrite-only” behavior out of Codex was a skill. It worked sometimes. It did not hold up.

A skill provides guidance, not a hard execution boundary. Five problems showed up immediately:

- Skills cannot reliably stop Codex from solving the task when the model reads the user request as executable work.

- Skills cannot disable tools — file edits, shell, tests, browser actions all stay live.

- Skills depend on trigger quality. They may not load, or they may load alongside stronger instructions that override them.

- “Rewrite only” conflicts with the model’s default bias to act on user requests.

- Grounding the prompt requires light project exploration, which can drift into task execution.

A skill can shape behavior. It cannot guarantee enforcement. For strict rewrite-only behavior the right design is a dedicated layer that sits outside the agent loop, owns the rewrite step end-to-end, and never gets handed the tools that would let it act.

What Reprompt does

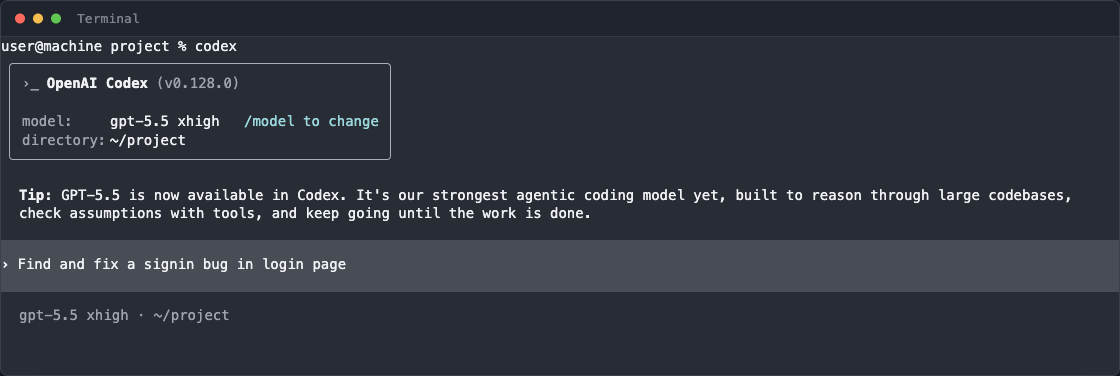

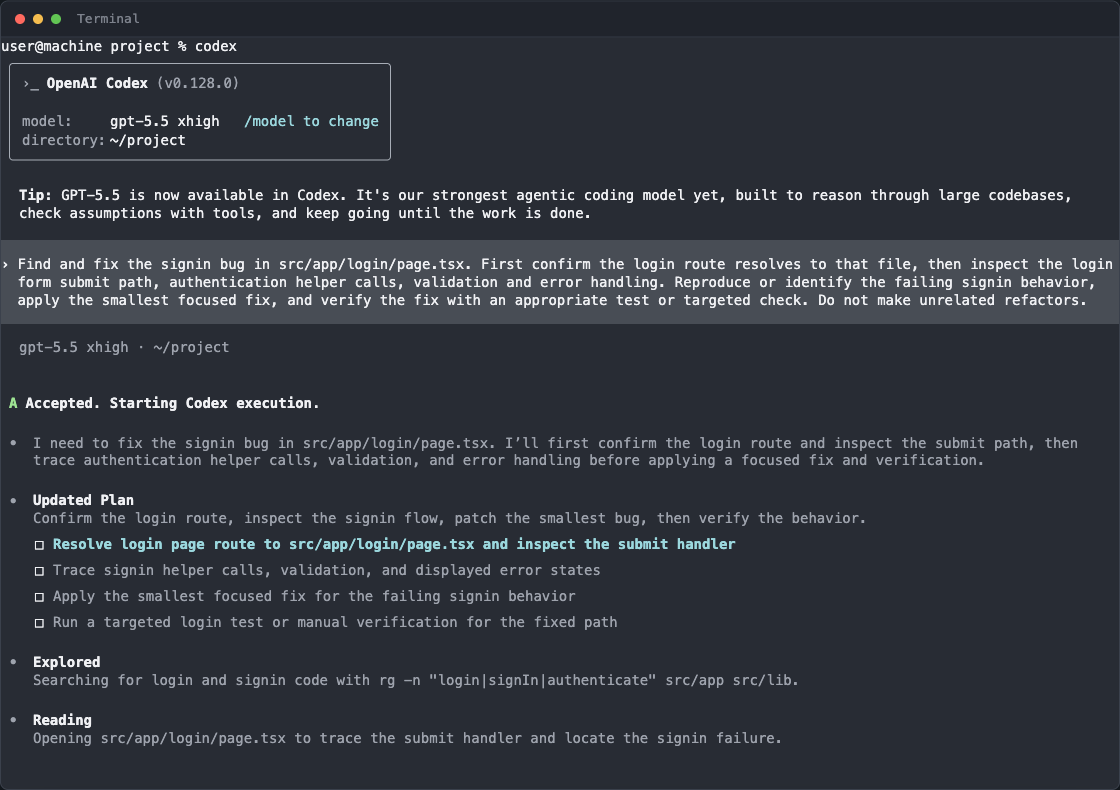

Reprompt is a setting in this Codex fork that intercepts user input before the main agent runs. It uses the existing ChatGPT or API-key authentication, calls a configured refiner model, grounds the request in the local repository, and rewrites the prompt into a clearer, more structured task.

The motivation is simple. People working in a project rarely attach every required file, symbol, or directory the agent should inspect. They describe the task at a high level and expect the agent to infer the rest. In practice, a well-grounded, well-structured prompt produces better runs.

Before Reprompt, this was a manual ritual: paste a rough requirement into ChatGPT, ask it to rewrite the requirement, and copy the result back into Codex. Reprompt brings that ritual inside the tool.

When the user submits, Reprompt fires first. It reads the working directory, pulls in recent conversation turns, expands @file and $skill mentions, and asks the refiner model for a structured rewrite.
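A minimal sketch of the mention-expansion step, written in Rust because the fork lives in codex-rs. The post says only that `@file` and `$skill` mentions are expanded. The tokenizing rules (whitespace-separated tokens, trailing sentence punctuation trimmed) and the names `Mention` and `extract_mentions` are hypothetical, not taken from the fork.

```rust
/// A mention found in the user's prompt (hypothetical type).
#[derive(Debug, PartialEq)]
enum Mention<'a> {
    /// `@path/to/file`: a file whose contents the refiner should see.
    File(&'a str),
    /// `$skill-name`: a skill whose description should be attached.
    Skill(&'a str),
}

/// Collect `@file` and `$skill` mentions from a prompt.
/// Assumption: mentions are whitespace-separated tokens, and trailing
/// punctuation such as "file.rs," or "skill." is not part of the name.
fn extract_mentions(prompt: &str) -> Vec<Mention<'_>> {
    prompt
        .split_whitespace()
        .filter_map(|token| {
            let token = token
                .trim_end_matches(|c: char| matches!(c, ',' | '.' | ';' | ':' | ')' | '?' | '!'));
            if let Some(path) = token.strip_prefix('@') {
                // A bare "@" is not a mention.
                (!path.is_empty()).then(|| Mention::File(path))
            } else if let Some(name) = token.strip_prefix('$') {
                // A bare "$" is not a mention either.
                (!name.is_empty()).then(|| Mention::Skill(name))
            } else {
                None
            }
        })
        .collect()
}
```

A known limitation of this naive tokenizer is that text like `$5` would be read as a skill named `5`. A real implementation would check candidates against the session's known skills and the files on disk.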

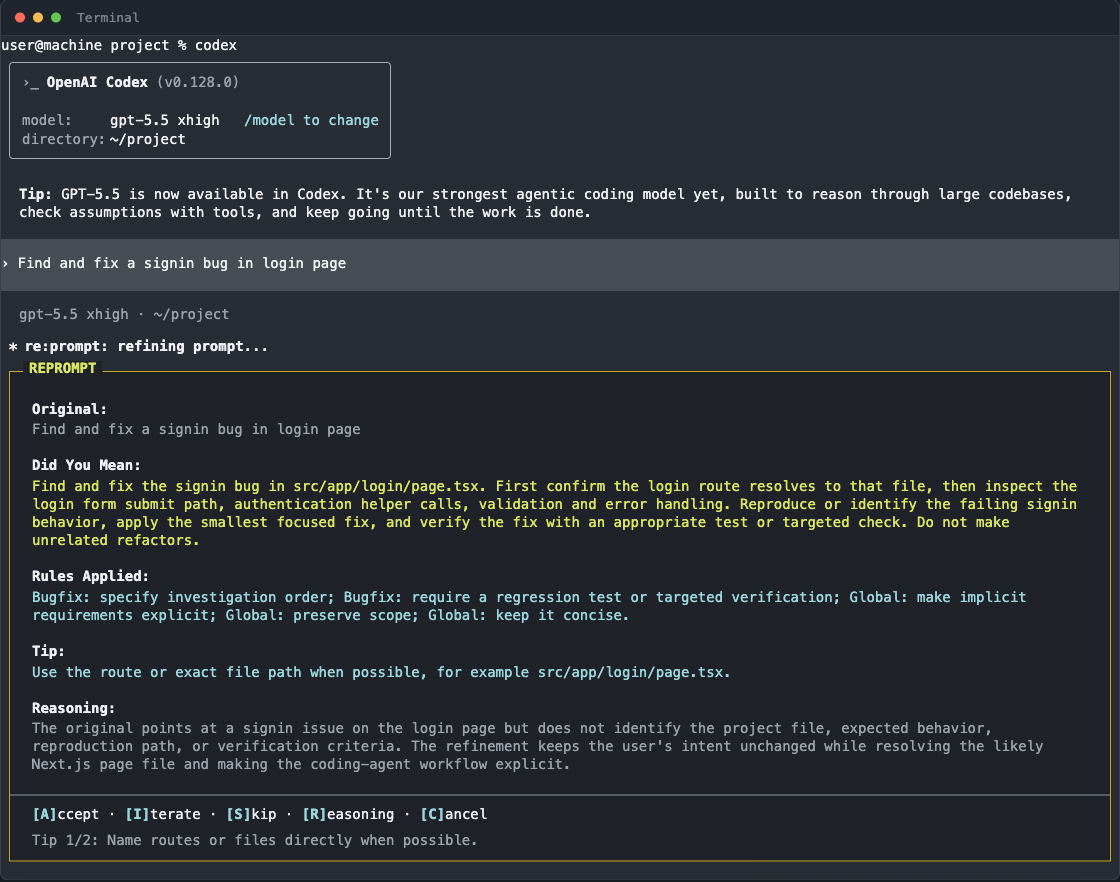

The overlay is the load-bearing UI element. It shows the original prompt, the refined version, the rules that were applied, a tip, and the model’s reasoning. The user can accept, iterate, skip, or cancel — keyboard-only, no mouse, no tab-out.

Once accepted, Codex executes against the refined prompt. The refined version is what the agent sees in its history; the original is preserved only for context.
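The four overlay choices reduce to a small decision about what, if anything, reaches the main agent. This is a hypothetical sketch. The post says only that accepted text replaces the original in the agent's history. Treating Skip as "submit the original" and Cancel as "submit nothing" are assumptions, as are the names `OverlayChoice`, `NextStep`, and `resolve`.

```rust
/// The four choices the overlay offers.
#[derive(Debug, Clone, Copy, PartialEq)]
enum OverlayChoice {
    Accept,
    Iterate,
    Skip,
    Cancel,
}

/// What the TUI does after the user chooses (hypothetical type).
#[derive(Debug, PartialEq)]
enum NextStep {
    /// Send this text to the main agent; it becomes the history entry.
    Submit(String),
    /// Run the refiner again, starting from the current refined draft.
    Refine(String),
    /// Send nothing to the agent (assumed: the composer keeps the original).
    Abort,
}

/// Map an overlay choice to the next step.
fn resolve(choice: OverlayChoice, original: &str, refined: &str) -> NextStep {
    match choice {
        OverlayChoice::Accept => NextStep::Submit(refined.to_string()),
        OverlayChoice::Iterate => NextStep::Refine(refined.to_string()),
        // Assumption: skipping means "use my prompt as written".
        OverlayChoice::Skip => NextStep::Submit(original.to_string()),
        OverlayChoice::Cancel => NextStep::Abort,
    }
}
```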

How it works

The fork adds roughly 3,400 lines, almost entirely concentrated in codex-rs/tui/src/reprompt/. Core Codex logic is largely untouched.

TUI-level capture

When /reprompt is enabled, every user submission that meets the min_length threshold passes through the refinement pipeline before reaching the main agent loop. Shorter submissions skip refinement and go straight to the agent.
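The gate is a single comparison: submissions at least `min_length` long go to the refiner, and shorter ones bypass it. This sketch assumes the length is counted in characters after trimming surrounding whitespace. The post does not say whether bytes or characters are counted. `RepromptGate` is a hypothetical name.

```rust
/// Subset of the `[reprompt]` settings that decide whether refinement runs
/// (hypothetical type).
struct RepromptGate {
    enabled: bool,
    /// Submissions shorter than this bypass the refiner.
    min_length: usize,
}

impl RepromptGate {
    fn should_refine(&self, submission: &str) -> bool {
        // Assumption: count Unicode scalar values, not bytes, and ignore
        // leading/trailing whitespace.
        self.enabled && submission.trim().chars().count() >= self.min_length
    }
}
```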

Repo-aware context build

The refiner sees a cached project tree (depth 4, ~2000 chars), the most recent conversation turns, content-matched relevant files, and any extracted skills, plugins, or apps from session state.
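The depth and character limits on the project tree can both be applied in one pass over a sorted path listing. This sketch is hypothetical. It assumes the listing has already been walked and sorted, with each directory written with a trailing `/` and listed before its contents. `render_tree` is not a function from the fork.

```rust
/// Render repo-relative paths as an indented tree.
/// Assumption: `paths` is sorted, and each directory (e.g. "src/") appears
/// before its contents. Entries deeper than `max_depth` components are
/// skipped. Rendering stops with a "..." marker once the next line would
/// push the output past `max_chars`. The marker itself is not counted.
fn render_tree(paths: &[&str], max_depth: usize, max_chars: usize) -> String {
    let mut out = String::new();
    let mut used = 0; // characters emitted so far
    for path in paths {
        let parts: Vec<&str> = path.split('/').filter(|p| !p.is_empty()).collect();
        if parts.is_empty() || parts.len() > max_depth {
            continue;
        }
        let suffix = if path.ends_with('/') { "/" } else { "" };
        let line = format!("{}{}{}\n", "  ".repeat(parts.len() - 1), parts[parts.len() - 1], suffix);
        let len = line.chars().count();
        if used + len > max_chars {
            out.push_str("...\n");
            break;
        }
        used += len;
        out.push_str(&line);
    }
    out
}
```

With the documented defaults, the call would be `render_tree(&paths, 4, 2000)`. Because the output is cached (for `project_structure_cache_ttl_secs`, 30 seconds by default), the walk does not have to be repeated on every submission.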

Structured Responses API call

Reprompt makes a single call to the configured model (default o4-mini) at the /responses endpoint. The call is constrained by a JSON schema with the fields refinedPrompt, appliedRules, taskType, reasoning, tips, and a wasSubstantiveChange flag.
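The refinement request can be expressed as one Responses API body whose `text.format` is a strict JSON schema. The sketch below uses only std so it stays self-contained. The field names come from the post. The field types (for example `tips` as an array of strings), the schema name `refinement`, and the helper names are assumptions. The HTTP call and authentication headers are omitted.

```rust
/// JSON schema for the refiner's reply. Field names follow the post; the
/// types (e.g. `tips` as an array of strings) are assumptions.
const REFINEMENT_SCHEMA: &str = r#"{
  "type": "object",
  "properties": {
    "refinedPrompt": {"type": "string"},
    "appliedRules": {"type": "array", "items": {"type": "string"}},
    "taskType": {"type": "string"},
    "reasoning": {"type": "string"},
    "tips": {"type": "array", "items": {"type": "string"}},
    "wasSubstantiveChange": {"type": "boolean"}
  },
  "required": ["refinedPrompt", "appliedRules", "taskType", "reasoning", "tips", "wasSubstantiveChange"],
  "additionalProperties": false
}"#;

/// Encode `s` as a quoted JSON string, escaping quotes, backslashes and
/// control characters.
fn json_string(s: &str) -> String {
    let mut out = String::with_capacity(s.len() + 2);
    out.push('"');
    for c in s.chars() {
        match c {
            '"' => out.push_str("\\\""),
            '\\' => out.push_str("\\\\"),
            '\n' => out.push_str("\\n"),
            '\r' => out.push_str("\\r"),
            '\t' => out.push_str("\\t"),
            c if (c as u32) < 0x20 => out.push_str(&format!("\\u{:04x}", c as u32)),
            c => out.push(c),
        }
    }
    out.push('"');
    out
}

/// Build a request body for the /responses endpoint. The refiner gets
/// instructions and grounded input, but no tools, so it can only answer
/// with schema-shaped text. (Hypothetical helper.)
fn refinement_request_body(model: &str, system_prompt: &str, grounded_input: &str) -> String {
    let mut body = String::from("{");
    body.push_str(&format!("\"model\":{},", json_string(model)));
    body.push_str(&format!("\"instructions\":{},", json_string(system_prompt)));
    body.push_str(&format!("\"input\":{},", json_string(grounded_input)));
    body.push_str("\"text\":{\"format\":{\"type\":\"json_schema\",\"name\":\"refinement\",\"strict\":true,\"schema\":");
    body.push_str(REFINEMENT_SCHEMA);
    body.push_str("}}}"); // closes "format", "text", and the outer object
    body
}
```

Strict structured outputs require every property to appear in `required` and `additionalProperties` to be `false`, which is why the schema spells out both.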

Overlay before commit

If the change is substantive, the overlay opens. If not, the original prompt passes through untouched. The overlay auto-accepts after 15s by default, and the delay is configurable through auto_accept_delay.
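Routing after the refiner replies depends only on the `wasSubstantiveChange` flag, plus the auto-accept delay when the overlay opens. This is a hypothetical sketch. `Refinement`, `Route`, `route`, and `parse_delay` are illustrative names. Accepting `ms` and `m` suffixes alongside the documented `"15s"` is an assumption about the `auto_accept_delay` syntax.

```rust
use std::time::Duration;

/// Minimal view of the refiner's reply needed for routing (hypothetical type).
struct Refinement {
    refined_prompt: String,
    was_substantive_change: bool,
}

#[derive(Debug, PartialEq)]
enum Route {
    /// No substantive change: submit the original as-is, no overlay.
    PassThrough(String),
    /// Open the overlay; accept automatically once `auto_accept` elapses.
    Overlay { refined: String, auto_accept: Duration },
}

fn route(original: &str, r: Refinement, auto_accept: Duration) -> Route {
    if r.was_substantive_change {
        Route::Overlay { refined: r.refined_prompt, auto_accept }
    } else {
        Route::PassThrough(original.to_string())
    }
}

/// Parse delays like "15s", "500ms" or "1m" (assumed syntax).
fn parse_delay(s: &str) -> Option<Duration> {
    let s = s.trim();
    // Check "ms" before "s", since "500ms" also ends with 's'.
    if let Some(n) = s.strip_suffix("ms") {
        n.parse::<u64>().ok().map(Duration::from_millis)
    } else if let Some(n) = s.strip_suffix('s') {
        n.parse::<u64>().ok().map(Duration::from_secs)
    } else if let Some(n) = s.strip_suffix('m') {
        n.parse::<u64>().ok().and_then(|m| m.checked_mul(60)).map(Duration::from_secs)
    } else {
        None
    }
}
```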

The refinement call is a separate, stateless request. It does not have tools, it does not have shell, it cannot edit files. The only thing it can produce is text shaped by the JSON schema. That shape is the enforcement that a skill could not give.

What can be configured

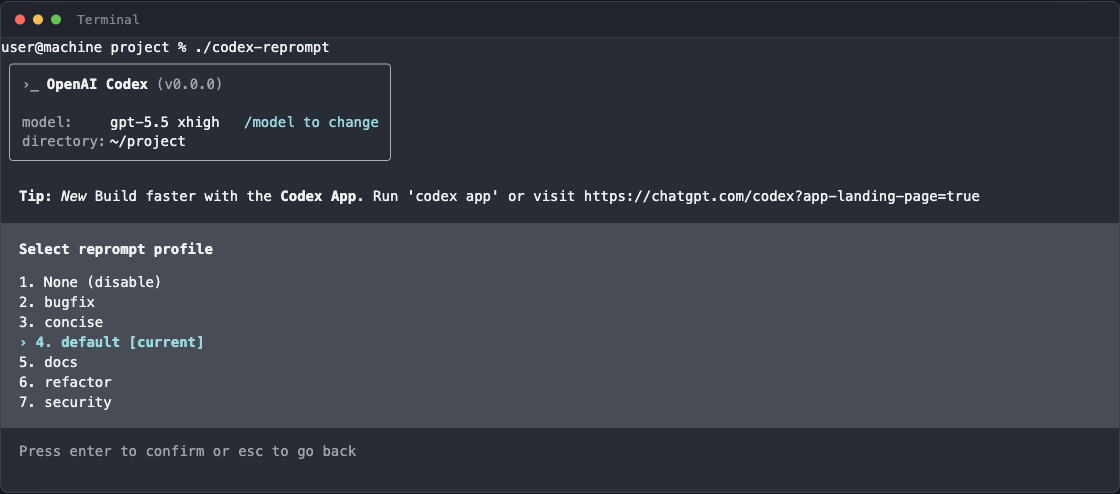

Reprompt has two configuration surfaces. The main `~/.codex/config.toml` holds defaults; named profiles in `~/.codex/reprompt/<name>.toml` override per task type.

```toml
[reprompt]
enabled = true
model = "o4-mini"
profile_name = "default"
min_length = 20 # skip short messages
context_turns = 4 # prior turns to include
auto_accept_delay = "15s"
show_diff = false

# Grounding controls
include_relevant_files = true
relevant_files_max_count = 8
relevant_files_max_chars = 600
include_project_structure = true
project_structure_max_depth = 4
project_structure_max_chars = 2000
project_structure_cache_ttl_secs = 30

# Safety
redact_secrets = true
redact_high_entropy = true
redaction_entropy_threshold = 4.5
```

Profiles add a system_prompt, an optional task_type tag (bugfix, feature, refactor, security, analysis, review), and rule lists that are grouped by task type. The seven shipped profiles are None, bugfix, concise, default, docs, refactor, and security.
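The `redact_high_entropy` and `redaction_entropy_threshold` settings suggest a Shannon-entropy filter, a common way to spot API keys and tokens in text. The sketch below rests on one plausible reading, which is an assumption: the threshold is measured in bits per character and applied to whitespace-separated tokens before the prompt and context are sent to the refiner. The 20-character minimum token length and the `[REDACTED]` marker are hypothetical.

```rust
use std::collections::HashMap;

/// Shannon entropy of `s` in bits per character:
/// H = -sum(p_i * log2(p_i)) over the distinct characters of `s`.
fn shannon_entropy(s: &str) -> f64 {
    let mut counts: HashMap<char, usize> = HashMap::new();
    for c in s.chars() {
        *counts.entry(c).or_insert(0) += 1;
    }
    let n = s.chars().count() as f64;
    counts
        .values()
        .map(|&k| {
            let p = k as f64 / n;
            -p * p.log2()
        })
        .sum()
}

/// Replace tokens whose entropy exceeds `threshold`.
/// Assumptions: tokens shorter than 20 characters are kept, because their
/// entropy estimate is too noisy. Whitespace runs are collapsed to single
/// spaces; a real implementation would preserve the original layout.
fn redact_high_entropy(text: &str, threshold: f64) -> String {
    text.split_whitespace()
        .map(|tok| {
            if tok.chars().count() >= 20 && shannon_entropy(tok) > threshold {
                "[REDACTED]"
            } else {
                tok
            }
        })
        .collect::<Vec<_>>()
        .join(" ")
}
```

For a token of n characters with no repeats, the entropy is exactly log2(n) bits. Clearing 4.5 bits therefore needs at least 23 distinct characters (2^4.5 ≈ 22.6). Ordinary words stay far below that threshold, while a 32-character token with no repeated characters scores exactly 5 bits.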

Switching profile is a /reprompt command away. The slash-picker also exposes /reprompt-insights for retrospective coaching: it scans past refinements and surfaces patterns in how the user tends to under-specify.
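The post does not describe how /reprompt-insights finds its patterns. One simple, hypothetical approach is to count which rules the refiner applied most often across past refinements. A rule that fires repeatedly points at something the user keeps leaving out. `top_applied_rules` and the history shape (one list of applied-rule strings per refinement) are assumptions.

```rust
use std::collections::HashMap;

/// Count how often each rule was applied across past refinements and
/// return the `n` most frequent, as a crude signal of what the user omits.
/// `history` holds one list of applied-rule strings per refinement.
fn top_applied_rules(history: &[Vec<String>], n: usize) -> Vec<(String, usize)> {
    let mut counts: HashMap<&str, usize> = HashMap::new();
    for rules in history {
        for rule in rules {
            *counts.entry(rule.as_str()).or_insert(0) += 1;
        }
    }
    let mut ranked: Vec<(String, usize)> =
        counts.into_iter().map(|(r, k)| (r.to_string(), k)).collect();
    // Highest count first; ties broken alphabetically for deterministic output.
    ranked.sort_by(|a, b| b.1.cmp(&a.1).then_with(|| a.0.cmp(&b.0)));
    ranked.truncate(n);
    ranked
}
```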

Why this shape

Three design choices held up across iterations.

The refiner is its own model call. Mixing refinement into the main agent’s system prompt re-creates the skill problem — the model decides whether to obey. A separate call with a separate model and a JSON schema gives a hard contract.

Grounding is bounded by character budgets, not just file counts. Files vary wildly in size, so a count cap alone says little about how much text reaches the refiner. Capping by characters keeps the refiner’s context predictable across repositories.
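With the relevant-file settings, the bound is simple arithmetic. If `relevant_files_max_chars` is a per-file cap, the defaults allow at most 8 × 600 = 4,800 characters of file excerpts, however large the files are. Whether the cap is per file or total is an assumption, since the post does not say. The sketch below shows that packing, using the hypothetical names `Candidate` and `pack_relevant_files` and assuming the files are ranked by relevance upstream.

```rust
/// A candidate file with its content, already ranked by relevance
/// (hypothetical type).
struct Candidate<'a> {
    path: &'a str,
    content: &'a str,
}

/// Keep at most `max_count` files and at most `max_chars` characters of each.
/// Truncation counts `char`s, so multi-byte UTF-8 text is never split
/// mid-character.
fn pack_relevant_files(ranked: &[Candidate<'_>], max_count: usize, max_chars: usize) -> Vec<(String, String)> {
    ranked
        .iter()
        .take(max_count)
        .map(|c| {
            let excerpt: String = c.content.chars().take(max_chars).collect();
            (c.path.to_string(), excerpt)
        })
        .collect()
}
```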

Profiles, not prompt templates. A bugfix needs an investigation order and a regression check. A refactor needs scope preservation. A security review needs threat-modeling questions. Encoding those as task-typed rule lists is more legible than maintaining seven different system prompts.
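Task-typed rule lists can be modeled as a lookup from task type to rules that are appended to a profile's system_prompt. This is a hypothetical sketch. The six task types are the documented task_type tags, and the rule text paraphrases the examples in this section. `TaskType`, `rules_for`, and `compose_instructions` are illustrative names, not the fork's code.

```rust
/// The documented task_type tags.
#[derive(Debug, Clone, Copy, PartialEq)]
enum TaskType {
    Bugfix,
    Feature,
    Refactor,
    Security,
    Analysis,
    Review,
}

impl TaskType {
    fn from_tag(tag: &str) -> Option<Self> {
        match tag {
            "bugfix" => Some(Self::Bugfix),
            "feature" => Some(Self::Feature),
            "refactor" => Some(Self::Refactor),
            "security" => Some(Self::Security),
            "analysis" => Some(Self::Analysis),
            "review" => Some(Self::Review),
            _ => None,
        }
    }
}

/// Rules for a task type. Entries paraphrase the examples in the text;
/// the empty lists are placeholders.
fn rules_for(task: TaskType) -> &'static [&'static str] {
    match task {
        TaskType::Bugfix => &[
            "Lay out an investigation order before proposing a fix.",
            "Require a regression check for the fixed behavior.",
        ],
        TaskType::Refactor => &["Preserve existing behavior and keep the change within the stated scope."],
        TaskType::Security => &["Raise threat-modeling questions before changing code."],
        _ => &[],
    }
}

/// Append the task type's rules to the profile's system prompt as a bullet list.
fn compose_instructions(system_prompt: &str, task: TaskType) -> String {
    let mut out = system_prompt.to_string();
    for rule in rules_for(task) {
        out.push_str("\n- ");
        out.push_str(rule);
    }
    out
}
```

Keeping the rules as data rather than as separate prompt templates means that adding a rule is a one-line change, and the same base system_prompt is shared by every task type.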

Takeaways

Skills give guidance, not enforcement

A skill cannot disable tools or stop a model that interprets the user request as executable work. Hard boundaries belong in the wrapper, not in instructions.

Refinement is a different job from execution

Treating prompt rewriting as its own model call — with its own system prompt, its own model, and structured output — keeps the planner from leaking into the doer.

Grounding beats verbosity

A short prompt with the right file path, the right symbol, and the right task type produces better runs than a long prompt that asks the agent to figure out the rest.

Profiles encode taste

Bugfix, refactor, security, and docs are different jobs with different rules. A per-task profile is a cheap way to bake that taste into every prompt.

The tool ships as a fork rather than a plugin because the boundary it needs lives below the slash-command surface. In upstream Codex, skills, hooks, and config do not currently expose a “before-the-agent-runs” interception point. Until they do, the fork is the smallest place to put a layer that has to run first and has to run every time.